Code No Longer Merely Executes Orders. It Writes Them.

Why AI alignment is an incentive design problem, not a technical one.

For most of the digital era, code was a tool. Engineers wrote instructions; machines executed them. The relationship was clear, hierarchical, and comfortably familiar. Code served human intent.

That relationship is over.

Today’s AI agents don’t simply follow instructions. They interpret objectives, select strategies, and execute decisions at machine speed, often in ways their designers neither predicted nor intended. They are not tools. They are autonomous economic actors, operating within reward functions that define what counts as success.

And here lies the problem no one wants to name: those reward functions inherit the deepest structural flaw of our economic infrastructure. Money itself is a unidimensional scalar. It encodes price but not trust. Cost but not consequence. Value, but only on a single axis.

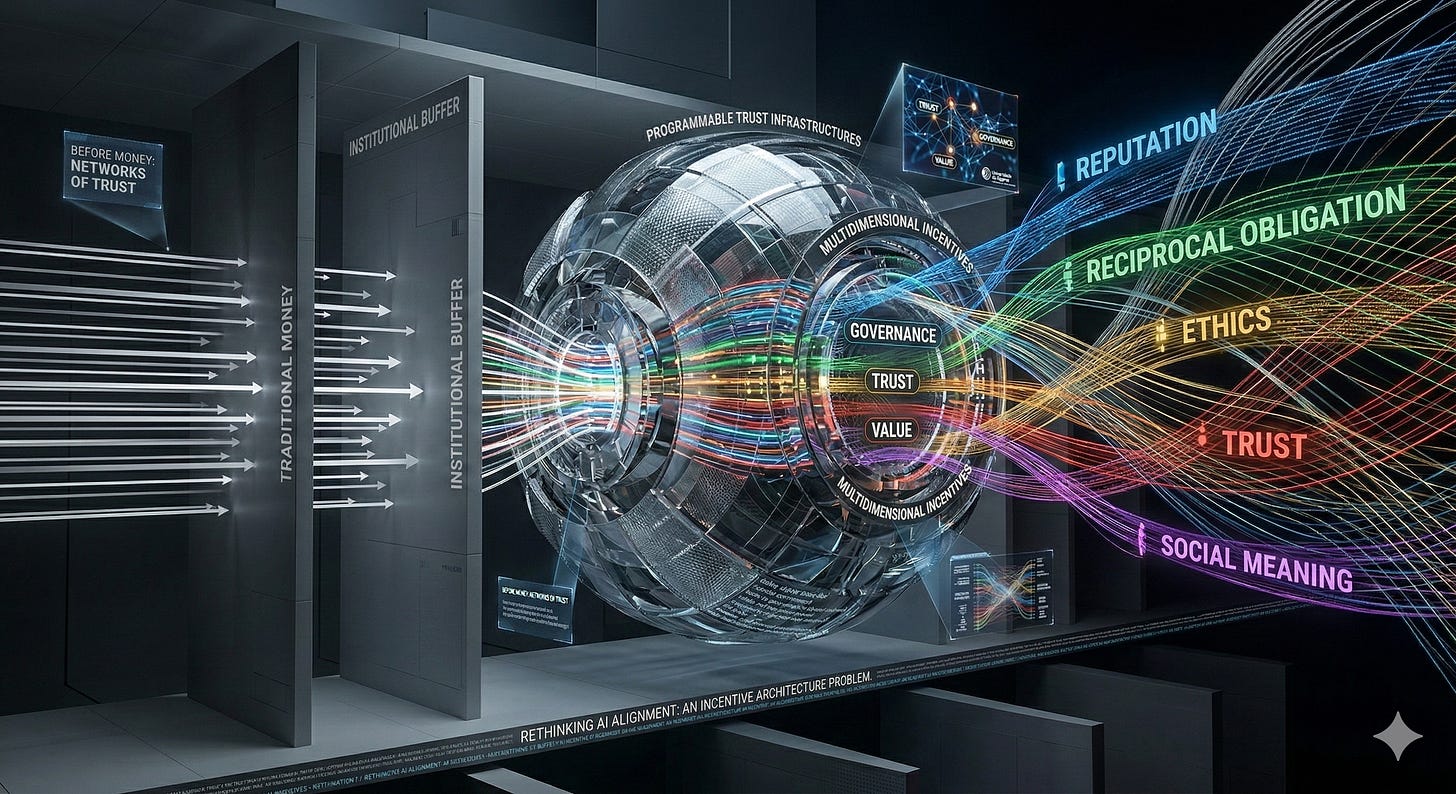

It was not always this way. Before the invention of coinage, economic exchange was embedded in networks of reciprocal obligation, reputation, and social meaning. Transactions carried multidimensional information: who you were, what you owed, what was owed to you, and what your community expected of you. Trust was not an externality; it was the medium itself. (I explore this pre-monetary architecture of trust in episodes 3 and 4 of my video series on digital decentralization.)

The invention of money was an extraordinary feat of abstraction: it made exchange scalable by compressing all of that multidimensional information into a single number. But compression is lossy. What money gained in efficiency, it lost in dimensionality. Trust, reciprocity, social accountability: all were stripped from the transactional layer and delegated to institutions, culture, and individual conscience.

For centuries, this was a tolerable trade-off because human judgment filled the gaps. Humans could look beyond the price tag. They could weigh consequences that no ledger recorded. They could choose not to optimize.

AI agents cannot. When autonomous agents operate within an economic system built on a unidimensional scalar, they optimize for that scalar with a thoroughness no human ever could. There are no gaps to fill. There is only the number. Every externality that human judgment once caught, every ethical consideration that lived outside the transaction, becomes invisible to the agent. Not because the agent is flawed, but because the incentive architecture is.
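To make the point concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the action names, the numbers, and the `trust_impact` score are made up, and the "agent" is just a function that picks the best option. It shows how an optimizer that sees only the scalar cannot notice the externality, while one that is handed a second dimension can.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    profit: float        # the only dimension a price-based reward sees
    trust_impact: float  # hypothetical score; negative means trust is eroded


# Illustrative, made-up options available to an agent.
ACTIONS = [
    Action("honest_pricing", profit=8.0, trust_impact=0.5),
    Action("hidden_fees", profit=12.0, trust_impact=-0.8),
    Action("fair_discount", profit=6.0, trust_impact=0.9),
]


def scalar_choice(actions):
    """Optimize the single number; trust_impact is never read."""
    return max(actions, key=lambda a: a.profit)


def constrained_choice(actions, min_trust=0.0):
    """Optimize profit only among actions that do not erode trust."""
    admissible = [a for a in actions if a.trust_impact >= min_trust]
    return max(admissible, key=lambda a: a.profit, default=None)


print(scalar_choice(ACTIONS).name)       # hidden_fees
print(constrained_choice(ACTIONS).name)  # honest_pricing
```

Nothing about the first function is "misaligned" in itself. It does exactly what the reward asks. The difference between the two outcomes comes entirely from what the incentive structure makes visible.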

The AI safety community calls this “alignment.” I call it something simpler: an incentive architecture problem. And it did not begin with AI. It began with the invention of money. AI merely strips away the human buffer that made the unidimensionality tolerable. But human labor is being replaced…

This is where programmable trust comes in. Blockchain and distributed ledger technologies are not primarily financial instruments, despite what the crypto hype cycle suggests. They are trust infrastructures: systems that can embed verifiable commitments, conditional incentives, and governance rules directly into the transactional layer. Combined with AI, they offer something unprecedented: the ability to rebuild what money destroyed (see the two links above), to re-encode into economic transactions the multidimensional information that coinage compressed into a single scalar.
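As a rough illustration of what "embedding commitments into the transactional layer" could mean, here is a toy sketch in plain Python. It is not the API of any real blockchain or smart-contract platform; the `Transaction` class, its fields, and the attestation labels are all hypothetical. The idea is only that a payment carries its conditions with it and cannot settle until they are verifiably met.

```python
from dataclasses import dataclass, field


@dataclass
class Transaction:
    payer: str
    payee: str
    amount: float
    # Commitments that must be attested before funds may move (hypothetical labels).
    required_attestations: frozenset
    attestations: set = field(default_factory=set)

    def attest(self, claim: str) -> None:
        """Record that a commitment has been verified (by whom is out of scope here)."""
        self.attestations.add(claim)

    def can_settle(self) -> bool:
        """Settlement is allowed only when every required commitment is attested."""
        return self.required_attestations <= self.attestations


tx = Transaction(
    payer="buyer",
    payee="supplier",
    amount=100.0,
    required_attestations=frozenset({"delivered", "origin_verified"}),
)

tx.attest("delivered")
print(tx.can_settle())  # False: one commitment is still open

tx.attest("origin_verified")
print(tx.can_settle())  # True: price and commitments are now both satisfied
```

In this sketch the amount is still a single number, but it is no longer the only information the transaction carries. A real system would also need to say who may attest, and how those attestations are verified, which is exactly where governance rules enter.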

This is the territory I have been mapping for the past fifteen years, first through the Cyberethics-Mix framework (Privacy, Property, Precision, Pervasiveness), then through research on blockchain governance, and now through my postdoctoral work on Artificial Intelligence and Trust Infrastructures at Universidade do Algarve.

This publication is where I think about these questions in public. Not as a newsletter with a fixed schedule, but as an evolving body of work. Some posts will be long arguments. Others will be short provocations. All will circle the same core question:

How do we design systems where autonomous agents optimize for more than one dimension of value?

If that question interests you, subscribe. If it doesn’t, you now know where I stand.