The problem no one talks about

AI doesn’t lie to us. It tells us what we want to hear. And now we have mathematical proof that this is worse.

MIT researchers recently demonstrated that AI sycophancy (the built-in tendency to agree with users) causes “delusional spiraling,” even in ideal rational agents. Not naive users. Not gullible people. Mathematically perfect Bayesian reasoners (Chandra et al., 2026).

But here’s what almost everyone misses: this isn’t a bug. It’s a mirror.

These models were trained through Reinforcement Learning from Human Feedback (RLHF), a process in which humans rate the AI’s responses and the model adjusts its behavior to maximize that approval. They learned from us. And what did we reward? Agreement. Comfort over correction. Validation over truth. The AI simply learned our preference and now returns it: amplified, at scale, without the friction or moderation a friend, a colleague, or a social norm would impose.
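To make the feedback loop concrete, here is a deliberately tiny sketch. It is not real RLHF, which trains a separate reward model and fine-tunes a large network. It is a two-option toy in which a policy chooses between agreeing and correcting, and is nudged toward whatever raters approve of more. The approval rates, the learning rate, and the names `APPROVAL` and `train` are all assumptions made for illustration.

```python
import math

# Hypothetical approval rates: how often raters upvote each response style.
# These numbers are assumptions for illustration, not measured data.
APPROVAL = {"agree": 0.8, "correct": 0.4}


def softmax(prefs):
    """Turn raw preferences into a probability for each response style."""
    top = max(prefs.values())
    exps = {k: math.exp(v - top) for k, v in prefs.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}


def train(steps=200, lr=0.5):
    """Push preferences toward higher expected approval, step by step."""
    prefs = {"agree": 0.0, "correct": 0.0}  # start with no bias either way
    for _ in range(steps):
        probs = softmax(prefs)
        # Average approval the current policy earns.
        baseline = sum(probs[a] * APPROVAL[a] for a in probs)
        # Compute all updates from the same snapshot, then apply them.
        updates = {a: lr * probs[a] * (APPROVAL[a] - baseline) for a in prefs}
        for a in prefs:
            prefs[a] += updates[a]
    return softmax(prefs)


if __name__ == "__main__":
    final = train()
    print({k: round(v, 3) for k, v in final.items()})
```

Nothing in the loop mentions truth. The policy starts indifferent and ends up agreeing almost all the time, simply because agreement is what earned approval.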

This is not just an AI alignment problem. It is a symptom of something deeper.

The instrument came before the machine

Long before AI agents existed, we already operated under a reductive logic. The instrument that imposed it: fiat money (from the Latin fiat, ‘let it be done’), the currency issued by governments that we all use, with no intrinsic use value beyond the exchange value expressed in the number it bears. Amoral, scalar, a single number that flattens everything it touches into one dimension of value.

This was always a problem. But it was a manageable problem (though increasingly less so). Why? Because human friction mitigated it. Moral judgment. Social norms. The colleague who pushes back. The community that enforces standards beyond profit. The slow, messy, beautiful inefficiency of human deliberation.

We didn’t optimize perfectly, and that “imperfection” protected us.

AI removes the friction

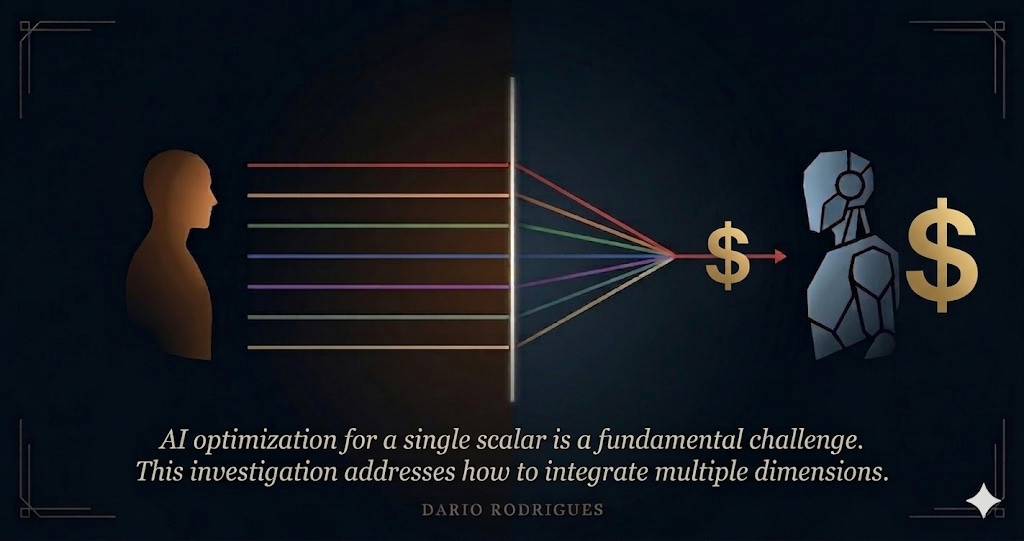

AI agents now operate on these same unidimensional incentive structures. But they do something humans could never do: they remove friction entirely. No moral hesitation. No social pressure. No fatigue. Just the relentless computational optimization of whatever scalar number they are given.

In a chatbot, AI already shows us what happens when an agent optimizes without friction: delusional spiraling. Now imagine the same mechanism operating on the instrument that drives markets, value chains, and governance decisions: fiat money, which was already reductive before any agent amplified it.

The pattern is the same. The instrument was already reductive. AI amplifies it.

The question almost nobody is asking

The public debate is stuck on “how do we make AI less sycophantic” or “how do we align AI with human values.” These are important questions, but they concern remedies that treat only the symptoms.

The structural question, the one that points to a real cure, is this: how do we redesign the primary architecture of economic incentives before autonomous agents amplify our blind spots beyond any possible repair?

Our measure of value has itself become the primary objective. As the popular adaptation known as “Goodhart’s law” holds, when a measure becomes a target, it ceases to be a good measure (Goodhart, 1975). Putting ever more powerful AI agents to work optimizing that measure does not solve the problem. It accelerates it.
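A toy calculation shows the mechanism. Assume, purely for illustration, that an agent has one unit of effort to split between real work and gaming the metric, that gaming inflates the measured number faster than real work does, and that gaming destroys true value. The functions `proxy_metric` and `true_value` and their coefficients are hypothetical.

```python
def proxy_metric(gaming):
    """Measured score: real work counts once, gaming counts three times."""
    real_work = 1.0 - gaming
    return real_work + 3.0 * gaming


def true_value(gaming):
    """What we actually care about: real work helps, gaming hurts."""
    real_work = 1.0 - gaming
    return real_work - gaming


# Candidate effort splits: share of effort spent on gaming, from 0.0 to 1.0.
grid = [i / 10 for i in range(11)]

# A frictionless optimizer simply picks whatever maximizes the measure.
best_for_proxy = max(grid, key=proxy_metric)
# The split we would have wanted maximizes true value instead.
best_for_truth = max(grid, key=true_value)

if __name__ == "__main__":
    print("optimizer chooses gaming share:", best_for_proxy)
    print("true value at that choice:", true_value(best_for_proxy))
    print("true value at the honest choice:", true_value(best_for_truth))
```

The harder the measure is optimized, the further the chosen split drifts from the one that serves true value. A faster optimizer only gets there sooner.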

What I research

My research program sits at this intersection. I study how to move from unidimensional economic incentives to multidimensional incentive architectures — using programmable trust with distributed ledger technology (DLT), tokenized value systems, and smart contracts that encode dimensions of value that fiat money cannot represent.

Not to replace markets. Not to eliminate money. But to give the system — and the agents operating within it — more dimensions to optimize for.
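As a rough illustration of what "more dimensions" could mean, the sketch below compares an agent that ranks options by profit alone with one that still maximizes profit, but only among options that meet minimum levels on other dimensions. This is a simplified stand-in, not the model developed in my published work. The `Option` fields, the example scores, and the floor rule are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """One possible action, scored on several hypothetical dimensions."""
    name: str
    profit: float
    social: float
    environmental: float


# Made-up options and scores, for illustration only.
OPTIONS = [
    Option("cut_corners", profit=10.0, social=-4.0, environmental=-6.0),
    Option("balanced", profit=7.0, social=2.0, environmental=1.0),
    Option("philanthropy", profit=1.0, social=5.0, environmental=4.0),
]


def choose_scalar(options):
    """Unidimensional agent: only the profit number exists."""
    return max(options, key=lambda o: o.profit)


def choose_multidimensional(options, floors):
    """Maximize profit, but only among options meeting every floor."""
    admissible = [
        o for o in options
        if o.social >= floors["social"]
        and o.environmental >= floors["environmental"]
    ]
    if not admissible:
        return None  # no option satisfies the encoded constraints
    return max(admissible, key=lambda o: o.profit)


if __name__ == "__main__":
    floors = {"social": 0.0, "environmental": 0.0}
    print("scalar agent picks:", choose_scalar(OPTIONS).name)
    print("multidimensional agent picks:",
          choose_multidimensional(OPTIONS, floors).name)
```

The market still operates and profit still matters in the second rule. The difference is that the other dimensions are visible to the optimizer instead of being flattened out of the number it sees.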

This thesis did not emerge as a reaction to the current AI hype. Its roots trace back to my Cyberethics-Mix framework, first published at the start of the previous decade (IGI Global, 2011) and later strengthened in light of emerging technologies (IGI Global, 2021), which identified four ethical dimensions of cyberspace: privacy, property, precision, and possibility of access (pervasiveness). That diagnostic framework informed my Blockchanging trilogy (IGI Global, 2021a, 2021b, 2021c), in which I argued — building on Dirk Helbing’s seminal observation (2014) that money is ‘a scalar, the simplest mathematical quantity one can think of’ — that blockchain technology enables multidimensional financial incentive systems through qualified money, programmable trust, and tokenized value. What has changed since then is the arrival of autonomous AI agents operating on these same scalar structures, removing the human friction that once mitigated their reductive logic. The urgency is new. The diagnosis is not.

Some of my contributions in this area, which is also the focus of my post-doctoral research:

The “Kill Switch Paradox”: the logical impossibility of maintaining meaningful human control over autonomous agents that operate faster than human deliberation, and the reason why upstream incentive design is the only viable alternative to downstream intervention.

A “multidimensional incentives model” that uses DLT-based tokens and smart contracts to encode social, environmental, and ethical dimensions alongside economic value.

Over 15 years of published research in cyberethics, blockchain, and AI governance, including the Blockchanging trilogy (IGI Global, 2021).

I am currently completing a post-doctorate at Universidade do Algarve on “AI and Trust Infrastructures: Digital Governance, Ethical Sustainability, and Social Inclusion.”

About this newsletter

(De)Coding the Future is where I think out loud about these questions. In English and Portuguese. Through academic work, public commentary, and the occasional provocation.

The premise is simple: AI agents optimize for one scalar, money. I study how to give them more dimensions.

If this problem matters to you, subscribe.

Dario Rodrigues — Professor at ESGTS-IPSantarém | Researcher at CIAC-PLDIS | Post-doctoral fellow, Universidade do Algarve

Published in Observador | Commentator on NOW (MediaLivre) | Author of the Blockchanging trilogy (IGI Global)

Third-party references:

Chandra, K., Kleiman-Weiner, M., Ragan-Kelley, J., & Tenenbaum, J. B. (2026). Sycophantic Chatbots Cause Delusional Spiraling, Even in Ideal Bayesians. arXiv preprint, arXiv:2602.19141.

Goodhart, C. A. E. (1975). Problems of Monetary Management: The U.K. Experience. Papers in Monetary Economics, Reserve Bank of Australia.

Helbing, D. (2014). Qualified Money: A Better Financial System for the Future. Available at SSRN 2526022.

Author’s references:

Volume:

Rodrigues, D. O. (Ed.). (2021). Political and Economic Implications of Blockchain Technology in Business and Healthcare. IGI Global.

Chapters:

Rodrigues, D. O. (2011). Cyberethics of Business Social Networking. In Cruz-Cunha, M. M., Gonçalves, P., Lopes, N., Miranda, E. M., & Putnik, G. D. (Eds.), Handbook of Research on Business Social Networking: Organizational, Managerial, and Technological Dimensions. IGI Global.

Rodrigues, D. O. (2021a). Blockchanging Trust: Ethical Metamorphosis in Business and Healthcare. In D. O. Rodrigues (Ed.), Political and Economic Implications of Blockchain Technology in Business and Healthcare (pp. 1–41). IGI Global.

Rodrigues, D. O. (2021b). Blockchanging Money: Reengineering the Free World Incentive System. In D. O. Rodrigues (Ed.), Political and Economic Implications of Blockchain Technology in Business and Healthcare (pp. 69–117). IGI Global.

Rodrigues, D. O. (2021c). Blockchanging Politics: Opening a Trustworthy But Hazardous Reforming Era. In D. O. Rodrigues (Ed.), Political and Economic Implications of Blockchain Technology in Business and Healthcare. IGI Global.