Trust in the Age of AI Is Not a Feeling; It Is an Infrastructure

When two adversaries do not trust each other, the solution is not to ask for trust. It is to build a mechanism that makes it unnecessary.

The same company. Two attacks. Three days.

On a Sunday, three Chinese AI laboratories used 24,000 fake accounts to ask Claude 16 million questions, systematically extracting its most advanced capabilities to train rival models. On Tuesday, the U.S. Secretary of Defense summoned Anthropic’s CEO to the Pentagon with a 72-hour ultimatum: remove all restrictions on military use, or lose a $200 million contract and face sanctions severe enough to destroy the business.

The temptation is to pick a side. The responsible company against the reckless general. The pragmatic military against the idealist CEO. Neither version is true, and neither solves anything.

The real driver of this crisis is not bad faith. It is the absence of any mechanism to verify good faith. The Pentagon cannot prove to Anthropic that it will respect agreed limits. Anthropic cannot verify compliance without accessing classified operations. Each side, acting rationally within its own constraints, deepens the other’s suspicion. The result is a cycle in which everyone loses, yet no one has an incentive to change course alone.

This is not a new problem. During the Cold War, the United States and the Soviet Union signed and honoured nuclear arms treaties not because they trusted each other, but because verification mechanisms made trust unnecessary. Inspectors, satellites, independent reports. Neither side believed in the other’s goodwill. Both believed in the mathematics of the instruments.

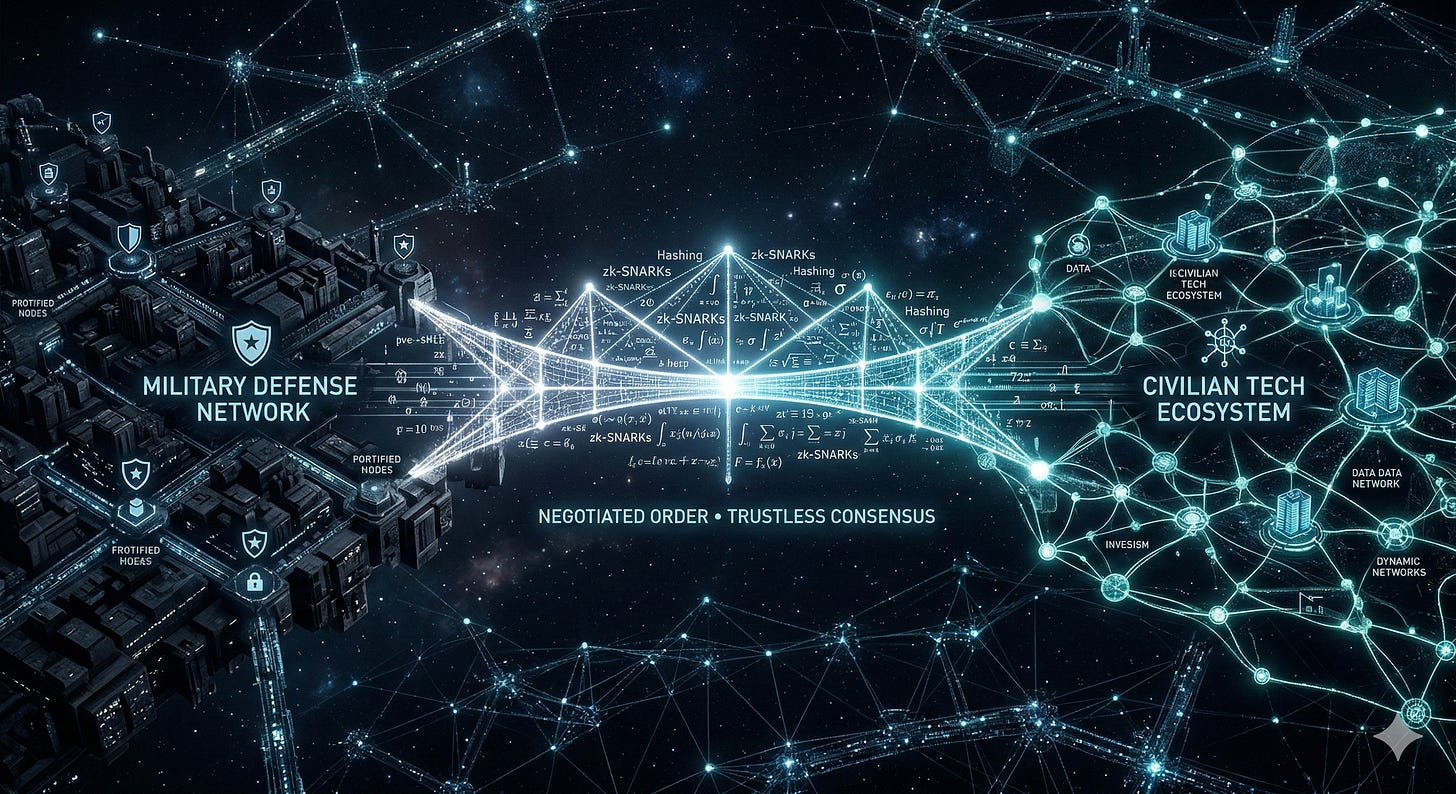

The same logic applies here. Cryptographic techniques already used in financial systems and digital identity, specifically zero-knowledge proofs, allow one party to prove to another that an agreed condition has been met, without revealing anything beyond that fact. The Pentagon could demonstrate that it used Claude within agreed parameters without disclosing a single operation. Anthropic could verify this without accessing any classified information. Certainty instead of suspicion. Mathematical proof instead of a political ultimatum.
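To make the idea concrete, here is a minimal sketch of one of the simplest zero-knowledge proofs, a Schnorr proof of knowledge, made non-interactive with a hash-derived challenge. It is an illustration, not a proposal: the prover shows that it knows a secret number behind a public value without revealing that number. The group parameters `P`, `Q` and `G` are toy values chosen here for readability and are far too small to be secure, and the function names are hypothetical. Proving that a model was used within agreed parameters would require a general-purpose proof system over a much richer statement, but the principle is the same: the verifier checks an equation, not a promise.

```python
import hashlib
import secrets

# Toy parameters, assumed for illustration only (insecure at this size).
# P = 2*Q + 1 with P and Q prime; G = 4 generates the subgroup of order Q.
P = 2039
Q = 1019
G = 4


def challenge(*values: int) -> int:
    """Derive the challenge by hashing the public transcript (Fiat-Shamir)."""
    data = b"|".join(str(v).encode() for v in values)
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def prove(secret: int):
    """Prove knowledge of `secret` such that public == G**secret mod P."""
    public = pow(G, secret, P)
    nonce = secrets.randbelow(Q - 1) + 1      # fresh random value in [1, Q-1]
    commitment = pow(G, nonce, P)
    c = challenge(G, public, commitment)
    response = (nonce + c * secret) % Q       # the random nonce masks the secret
    return public, commitment, response


def verify(public: int, commitment: int, response: int) -> bool:
    """Check G**response == commitment * public**c (mod P) without the secret."""
    c = challenge(G, public, commitment)
    return pow(G, response, P) == (commitment * pow(public, c, P)) % P


if __name__ == "__main__":
    public, commitment, response = prove(secret=123)
    print(verify(public, commitment, response))            # honest proof
    print(verify(public, commitment, (response + 1) % Q))  # tampered proof
```

The verifier only ever sees the public value, the commitment and the response. An honest proof satisfies the check, and a tampered response fails it.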

The barrier is not technical. It is political. Verification requires each party to accept being verifiable, which means sharing power. Governments accustomed to operating without scrutiny on national security matters resist. Companies accustomed to setting unilateral terms of use resist, too.

Meanwhile, no autocratic adversary has lost a single day debating ethical limits. That asymmetry is, in itself, the most urgent argument for building what is missing: not better models, not faster adoption, but infrastructure that makes distrust unnecessary.

That, paradoxically, is an advantage only open societies can build. It requires transparency, mutual verification, and institutions willing to be scrutinised. Autocracies can build powerful AI. What they cannot build is verifiable trust.

That is the real competitive advantage of democracies in the age of artificial intelligence, if they have the clarity to use it.

(This post expands on an article published today in Observador, Portugal’s leading online newspaper. The full piece, in Portuguese, is available here.)